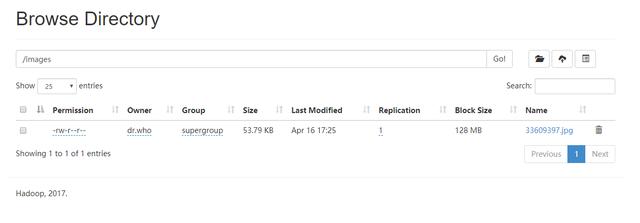

Uploading a file through the HDFS web UI

In Hadoop 3.x the HDFS web UI port changed from 50070 to 9870.

Upload an image. Its HDFS address is: hdfs://localhost:9000/images/33609397.jpg
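For reference, the address above can be split into its parts with the JDK's `java.net.URI`. This is only an illustrative sketch: the class name `HdfsUriParts` and the `describe` helper are made up for this example. It shows that the scheme is `hdfs`, and that the port in the address (9000, presumably the NameNode RPC port configured in `fs.defaultFS`) is separate from the 9870 web UI port.

```java
import java.net.URI;

public class HdfsUriParts {
    /** Returns "scheme|host|port|path" for the given address (hypothetical helper). */
    static String describe(String address) {
        URI uri = URI.create(address);
        return uri.getScheme() + "|" + uri.getHost() + "|" + uri.getPort() + "|" + uri.getPath();
    }

    public static void main(String[] args) {
        // prints: hdfs|localhost|9000|/images/33609397.jpg
        System.out.println(describe("hdfs://localhost:9000/images/33609397.jpg"));
    }
}
```

The scheme part is what Hadoop uses to decide which FileSystem implementation to load for a path.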

Maven dependencies

I am using Hadoop 3.0.0, so the dependencies are:

    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs</artifactId>
        <version>3.0.0</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs-client</artifactId>
        <version>3.0.0</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-common</artifactId>
        <version>3.0.0</version>
    </dependency>

Test code

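    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataInputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;

    public class ReadHdfsFileTest {
        public static void main(String[] args) throws IOException {
            Path path = new Path("hdfs://localhost:9000/images/33609397.jpg");
            // Load the configuration
            Configuration conf = new Configuration();
            // try-with-resources closes the stream and the file system,
            // even if reading fails
            try (FileSystem fs = path.getFileSystem(conf);
                 FSDataInputStream is = fs.open(path)) {
                // Number of bytes that can still be read from the stream
                int available = is.available();
                System.out.println(available);
            }
        }
    }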

Error

    Exception in thread "main" org.apache.hadoop.fs.UnsupportedFileSystemException: No FileSystem for scheme "hdfs"
        at org.apache.hadoop.fs.FileSystem.getFileSystemClass(FileSystem.java:3266)
        at org.apache.hadoop.fs.FileSystem.createFileSystem(FileSystem.java:3286)
        at org.apache.hadoop.fs.FileSystem.access$200(FileSystem.java:123)
        at org.apache.hadoop.fs.FileSystem$Cache.getInternal(FileSystem.java:3337)
        at org.apache.hadoop.fs.FileSystem$Cache.get(FileSystem.java:3305)
        at org.apache.hadoop.fs.FileSystem.get(FileSystem.java:476)
        at org.apache.hadoop.fs.Path.getFileSystem(Path.java:361)
        at hdfs.ReadHdfsFileTest.main(ReadHdfsFileTest.java:21)
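The top frame, `FileSystem.getFileSystemClass`, is where Hadoop maps a URI scheme to an implementation class. As a rough mental model only (this is not Hadoop's actual code, and `SchemeLookupSketch`, `implementationFor` and the plain `Map` registry are all invented for illustration), the lookup behaves something like this: if nothing on the classpath has registered an implementation for the scheme, the lookup fails with a "No FileSystem for scheme" message.

```java
import java.util.HashMap;
import java.util.Map;

public class SchemeLookupSketch {
    /** Toy lookup: returns the implementation class name registered for a scheme. */
    static String implementationFor(Map<String, String> registry, String scheme) {
        String impl = registry.get(scheme);
        if (impl == null) {
            // Stand-in for Hadoop's UnsupportedFileSystemException
            throw new IllegalStateException("No FileSystem for scheme \"" + scheme + "\"");
        }
        return impl;
    }

    public static void main(String[] args) {
        // Hypothetical registry: only the local file system is known
        Map<String, String> registry = new HashMap<>();
        registry.put("file", "org.apache.hadoop.fs.LocalFileSystem");
        try {
            implementationFor(registry, "hdfs");
        } catch (IllegalStateException e) {
            System.out.println(e.getMessage());
        }
        // Once an implementation for "hdfs" is registered, the lookup succeeds
        registry.put("hdfs", "org.apache.hadoop.hdfs.DistributedFileSystem");
        System.out.println(implementationFor(registry, "hdfs"));
    }
}
```

In this model, a missing jar simply means the `hdfs` entry is never registered, which is why the error names the scheme rather than a class.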

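The real cause is a missing class: DistributedFileSystem.class

In Hadoop 3.0.0 this class is packaged in hadoop-hdfs-client (in earlier 2.x releases it shipped in hadoop-hdfs, not hadoop-common), so the error appears when that artifact is not on the classpath. Adding the following Maven dependency fixes it: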

    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-hdfs-client</artifactId>
        <version>3.0.0</version>
    </dependency>
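One way to confirm that the dependency has taken effect is to check whether the class can be found on the classpath. The sketch below uses only the JDK; `ClasspathCheck` and `isOnClasspath` are names made up for this example, and the fully qualified class name is assumed to be `org.apache.hadoop.hdfs.DistributedFileSystem`.

```java
public class ClasspathCheck {
    /** Returns true if the named class can be located, without initializing it. */
    static boolean isOnClasspath(String className) {
        try {
            Class.forName(className, false, ClasspathCheck.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            return false;
        }
    }

    public static void main(String[] args) {
        // Assumed class name; the result depends on the project's classpath
        String name = "org.apache.hadoop.hdfs.DistributedFileSystem";
        System.out.println(name + " on classpath: " + isOnClasspath(name));
    }
}
```

Run inside the same project, this should report `true` once hadoop-hdfs-client is resolved, and `false` if the jar is still missing.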

The program now runs successfully.

Output:

log4j:WARN See #noconfig for more info.

55076