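2.1.1 Summary

This is the hands-on companion to Chapter 2, "Kubernetes Installation and Configuration Guide", of 《Kubernetes权威指南:从Docker到Kubernetes实践全接触(第4版)》. The book's treatment is fairly terse, so this walkthrough lists every problem encountered along with its solution.

2.1.3 Hands-on Content

2.1.3.1 k8s Cluster Planning

Cluster node allocation:

(1) k8s-master  internal IP: 192.168.0.3  public IP: 114.67.107.240  data directory: /data/k8s

(2) k8s-node1  internal IP: 192.168.0.3  public IP: 114.67.110.126  data directory: /root/k8s

(3) k8s-node2  internal IP: 192.168.0.5  public IP: 114.67.107.226  data directory: /data/k8s

Host configuration: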

$ vim /etc/hosts

# Add the following entry to silence the "sudo: unable to resolve host" warning

127.0.0.1 k8s-master

$ sudo hostnamectl set-hostname k8s-master   # run on k8s-master, IP: 114.67.107.240

$ vim /etc/hosts

# Add the following entry to silence the "sudo: unable to resolve host" warning

127.0.0.1 k8s-node1

$ sudo hostnamectl set-hostname k8s-node1   # run on k8s-node1, IP: 114.67.110.126

$ vim /etc/hosts

# Add the following entry to silence the "sudo: unable to resolve host" warning

127.0.0.1 k8s-node2

$ sudo hostnamectl set-hostname k8s-node2   # run on k8s-node2, IP: 114.67.107.226

# Verify the result

$ hostnamectl

2.1.3.2 环境配置

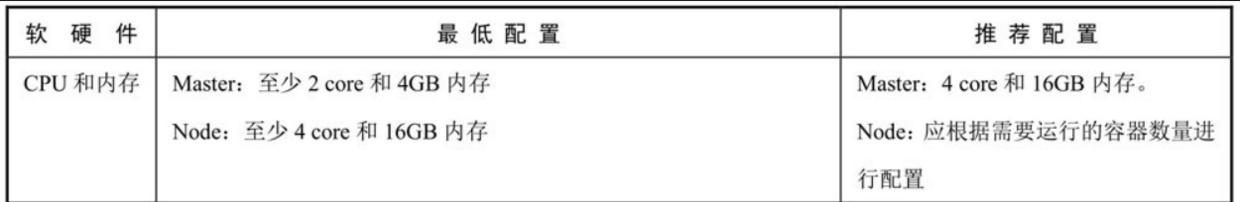

(1)环境要求

硬件配置要求

操作系统:Ubuntu 18.04.1 LTS

内核:4.15.0-36-generic

(2)准备工作

[1] 禁用开机启动防火墙

关闭ufw防火墙,Ubuntu默认未启用,无需设置。

sudo ufw disable

systemctl stop firewalld

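[2] Permanently disable SELinux

Ubuntu does not install SELinux by default. If it has been installed, relax it as follows.

Permanent setting:

$ sudo vi /etc/selinux/config

SELINUX=permissive

Note: permissive mode only logs policy violations and no longer enforces them; set SELINUX=disabled to turn SELinux off completely.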

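[3] Enable packet forwarding

Edit /etc/sysctl.conf to enable IPv4 forwarding:

$ sudo vim /etc/sysctl.conf

# Enable IPv4 forwarding so the kernel can route packets

net.ipv4.ip_forward = 1

After saving, apply the setting:

$ sudo sysctl -p

Notes:

What is IPv4 forwarding? For security reasons, Linux disables packet forwarding by default. Forwarding means that when a host has more than one network interface, a packet received on one interface is sent, according to its destination IP address, to another interface on the same host, which then sends it on according to the routing table. This is normally the job of a router.

Both kube-proxy in ipvs mode and calico involve routed forwarding, so both need IPv4 forwarding enabled on the host.

Even without k8s, plain docker depends on IPv4 forwarding in two situations:

<1> Two containers on the same host but on different bridges (a different bridge is effectively a different subnet, and crossing subnets requires routing) access each other

<2> A container accesses the outside network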
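To confirm that the forwarding setting actually took effect, the kernel's current value can be read back from /proc. The following is a minimal sketch; the function name ip_forward_enabled and its optional path argument are illustrative choices made here (the argument exists only so the check can be tried on a test file), not part of any tool.

```shell
# Succeed when the given proc entry (default: the real IPv4 forwarding switch) contains 1.
ip_forward_enabled() {
  local proc_file="${1:-/proc/sys/net/ipv4/ip_forward}"
  [ "$(cat "$proc_file")" = "1" ]
}

# Example use on a node:
#   ip_forward_enabled && echo "forwarding is on" || echo "forwarding is off"
```

If the check reports that forwarding is off after editing /etc/sysctl.conf, the most likely cause is that sudo sysctl -p has not been run yet.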

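[4] Change the default policy of the firewall FORWARD chain

After routing, traffic that is not destined for the local host passes through the iptables FORWARD chain. Starting with version 1.13, docker sets the default policy of the FORWARD chain to DROP, which causes problems such as two pods on different hosts failing to reach each other by pod IP. There are two solutions:

Solution 1: run iptables -P FORWARD ACCEPT on every node at boot

Solution 2: stop docker from managing iptables

Solution 1, effective until reboot:

$ sudo iptables -P FORWARD ACCEPT

iptables settings are lost on reboot. To reapply the rule automatically, add it to /etc/rc.local:

/usr/sbin/iptables -P FORWARD ACCEPT

Solution 2

Add the --iptables=false option to the docker startup parameters so that docker no longer touches iptables. For version 1.10 and later, edit the default docker daemon configuration file /etc/docker/daemon.json:

{

...

"iptables": false

...

}

Note: solution 2 is recommended.

The Kubernetes website recommends starting docker with --iptables=false when it is combined with k8s, so that docker leaves iptables alone and kube-proxy manages it entirely.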


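[5] Disable swap

Turn off all swap partitions:

$ sudo swapoff -a

Also edit /etc/fstab and comment out the swap mount entry so that swap is not re-enabled after a reboot.

Starting with Kubernetes 1.8, swap must be turned off; otherwise, with the default configuration, kubelet will not start. The restriction can be lifted with the kubelet startup parameter --fail-swap-on=false, but this is not recommended. It is best to leave swap disabled.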
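The /etc/fstab edit can also be scripted. The sketch below is one possible approach, assuming swap entries carry the word swap as a whitespace-delimited field (the usual fstab layout); the function name comment_out_swap is made up for this example. It only prints the modified content, so the result can be reviewed before anything is overwritten.

```shell
# Print the fstab content with every active (not yet commented) swap entry commented out.
comment_out_swap() {
  local fstab="${1:-/etc/fstab}"
  sed -E '/^[[:space:]]*#/! s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$fstab"
}

# Example use:
#   comment_out_swap /etc/fstab > /tmp/fstab.new
#   diff /etc/fstab /tmp/fstab.new    # review the change
#   sudo cp /tmp/fstab.new /etc/fstab
```

Writing to a separate file first avoids reading and truncating /etc/fstab in the same pipeline.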


[6] Configure iptables parameters so that traffic crossing a bridge also passes through the iptables/netfilter firewall

$ sudo tee /etc/sysctl.d/k8s.conf <<-'EOF'

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

$ sudo sysctl --system

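Notes:

A network plugin has to give kube-proxy some specific support. For example, kube-proxy's iptables mode is built on iptables, so the network plugin must make sure container traffic passes through iptables. Some plugins use a bridge, which works at the data link layer, while the iptables/netfilter firewall works at the network layer. The settings above send bridged traffic through iptables/netfilter as well, so that kube-proxy in iptables mode works correctly.

When no kubelet network plugin is specified, the noop plugin is used by default. It also sets net/bridge/bridge-nf-call-iptables=1 so that kube-proxy in iptables mode works correctly.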
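The bridge settings only exist under /proc/sys/net/bridge once the br_netfilter kernel module is loaded, so it can help to check them after running sysctl --system. The following sketch reports the state of both keys; the function name bridge_nf_status and its optional directory argument are assumptions made for illustration.

```shell
# Print the value of each bridge-nf key, or note that the entry is missing.
bridge_nf_status() {
  local dir="${1:-/proc/sys/net/bridge}"
  local key
  for key in bridge-nf-call-iptables bridge-nf-call-ip6tables; do
    if [ ! -r "$dir/$key" ]; then
      echo "$key: missing (is the br_netfilter module loaded?)"
    else
      echo "$key: $(cat "$dir/$key")"
    fi
  done
}

# Example use:
#   bridge_nf_status
```

If an entry is reported as missing, loading the module with sudo modprobe br_netfilter and re-running sudo sysctl --system is a reasonable next step.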


(3) Install docker

[1] Install and start docker

$ apt-get update

$ apt install docker.io

$ systemctl enable docker && systemctl start docker

$ systemctl status docker

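[2] Configure the docker registry mirror

Apply the following docker configuration.

Set up the Alibaba Cloud registry mirror to speed up pulls from Docker Hub. Access to Docker Hub from mainland China is unreliable, so image pulls are proxied through the official Docker China mirror.

$ vim /etc/docker/daemon.json

{

"registry-mirrors": ["<registry mirror URL>"],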

"iptables": false,

"ip-masq": false,

"storage-driver": "overlay2",

"graph": "/home/lk/docker"

}

$ sudo systemctl restart docker

(4) Install kubeadm, kubelet, and kubectl

[1] Create the kubernetes repo

Create the kubernetes apt source file. When Google's addresses are blocked, use the Alibaba Cloud or USTC mirror instead:

sudo apt-get update && sudo apt-get install -y apt-transport-https curl

sudo curl -s | sudo apt-key add -

sudo tee /etc/apt/sources.list.d/kubernetes.list <<-'EOF'

deb kubernetes-xenial main

EOF

sudo apt-get update

[2] Install kubeadm, kubelet, and kubectl

1. List the available versions:

$ apt-cache madison kubeadm

kubeadm | 1.21.3-00 | kubernetes-xenial/main amd64 Packages

kubeadm | 1.21.2-00 | kubernetes-xenial/main amd64 Packages

kubeadm | 1.21.1-00 | kubernetes-xenial/main amd64 Packages

kubeadm | 1.21.0-00 | kubernetes-xenial/main amd64 Packages

kubeadm | 1.20.9-00 | kubernetes-xenial/main amd64 Packages

kubeadm | 1.20.8-00 | kubernetes-xenial/main amd64 Packages

kubeadm | 1.20.7-00 | kubernetes-xenial/main amd64 Packages

kubeadm | 1.20.6-00 | kubernetes-xenial/main amd64 Packages

......

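2. Install a specific version:

$ sudo apt-get install -y kubelet=1.20.5-00 kubeadm=1.20.5-00 kubectl=1.20.5-00

# Hold the packages so that apt upgrade skips them. To upgrade later, unhold first, upgrade, then hold again.

$ sudo apt-mark hold kubelet kubeadm kubectl

3. Enable kubelet at boot and start it:

sudo systemctl enable kubelet && sudo systemctl start kubelet

Note:

At this point the kubelet service is in an abnormal state because its main configuration files, such as kubelet.conf, are missing. This can be ignored for now; the files are generated only after the Master node has been initialized.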

# systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/lib/systemd/system/kubelet.service; enabled; vendor preset: enabled)

Drop-In: /etc/systemd/system/kubelet.service.d

└─10-kubeadm.conf

Active: active (running) since Thu 2021-07-29 15:51:07 CST; 55min ago

Docs:

Main PID: 20437 (kubelet)

Tasks: 27 (limit: 4915)

CGroup: /system.slice/kubelet.service

└─20437 /usr/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf --config=/var/lib/kubelet/config.yaml --network-plu

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.491624 20437 remote_runtime.go:116] RunPodSandbox from runtime service failed: rpc error: code = Unknown desc = failed to set up sa

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.491680 20437 kuberuntime_sandbox.go:70] CreatePodSandbox for pod "coredns-74ff55c5b-6rvx4_kube-system(bd28b427-0af4-434d-a170-416cf

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.491694 20437 kuberuntime_manager.go:755] createPodSandbox for pod "coredns-74ff55c5b-6rvx4_kube-system(bd28b427-0af4-434d-a170-416c

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.491742 20437 pod_workers.go:191] Error syncing pod bd28b427-0af4-434d-a170-416cf3ac871d ("coredns-74ff55c5b-6rvx4_kube-system(bd28b

Jul 29 16:47:00 k8s-master kubelet[20437]: W0729 16:47:00.636211 20437 pod_container_deletor.go:79] Container "c820e1b0c312b05ef5cdb247a5e0afdead74f026909df40a8a8782fc998b67b1" not found in

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.636368 20437 cni.go:366] Error adding kube-system_coredns-74ff55c5b-gcfjf/c820e1b0c312b05ef5cdb247a5e0afdead74f026909df40a8a8782fc9

Jul 29 16:47:00 k8s-master kubelet[20437]: W0729 16:47:00.638632 20437 docker_sandbox.go:402] failed to read pod IP from plugin/docker: networkPlugin cni failed on the status hook for pod "

Jul 29 16:47:00 k8s-master kubelet[20437]: W0729 16:47:00.642714 20437 pod_container_deletor.go:79] Container "7a3b54ba7a6c63e57621c06b8fd784120befa2327e99d85e670d94d1f85620a8" not found in

Jul 29 16:47:00 k8s-master kubelet[20437]: W0729 16:47:00.643960 20437 cni.go:333] CNI failed to retrieve network namespace path: cannot find network namespace for the terminated container

Jul 29 16:47:00 k8s-master kubelet[20437]: W0729 16:47:00.644073 20437 cni.go:333] CNI failed to retrieve network namespace path: cannot find network namespace for the terminated container

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.869338 20437 remote_runtime.go:116] RunPodSandbox from runtime service failed: rpc error: code = Unknown desc = failed to set up sa

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.869386 20437 kuberuntime_sandbox.go:70] CreatePodSandbox for pod "coredns-74ff55c5b-gcfjf_kube-system(50027ab9-037c-4a63-932a-a6bf3

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.869399 20437 kuberuntime_manager.go:755] createPodSandbox for pod "coredns-74ff55c5b-gcfjf_kube-system(50027ab9-037c-4a63-932a-a6bf

Jul 29 16:47:00 k8s-master kubelet[20437]: E0729 16:47:00.869440 20437 pod_workers.go:191] Error syncing pod 50027ab9-037c-4a63-932a-a6bf39a16e54 ("coredns-74ff55c5b-gcfjf_kube-system(50027

Problem 1:

# systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/lib/systemd/system/kubelet.service; enabled; vendor preset: enabled)

Drop-In: /etc/systemd/system/kubelet.service.d

└─10-kubeadm.conf

Active: activating (auto-restart) (Result: exit-code) since Mon 2021-08-16 09:59:06 CST; 2s ago

Docs:

Process: 22001 ExecStart=/usr/bin/kubelet $KUBELET_KUBECONFIG_ARGS $KUBELET_CONFIG_ARGS $KUBELET_KUBEADM_ARGS $KUBELET_EXTRA_ARGS (code=exited, status=255)

Main PID: 22001 (code=exited, status=255)

Aug 16 09:59:06 k8s-node2 systemd[1]: kubelet.service: Unit entered failed state.

Aug 16 09:59:06 k8s-node2 systemd[1]: kubelet.service: Failed with result 'exit-code'.

Start with the kubelet log, which has the most detail.

# journalctl -xeu kubelet | less

Key error message:

-- The start-up result is done.

Aug 16 09:58:52 k8s-node2 kubelet[21974]: F0816 09:58:52.200450 21974 server.go:198] failed to load Kubelet config file /var/lib/kubelet/config.yaml, error failed to read kubelet config file "/var/lib/kubelet/config.yaml", error: open /var/lib/kubelet/config.yaml: no such file or directory

Aug 16 09:58:52 k8s-node2 kubelet[21974]: goroutine 1 [running]:

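This is usually a failed join caused by leftover files from a previous installation. Remove all related files and directories, then start over.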

sudo systemctl stop kubelet

sudo systemctl disable kubelet

sudo apt-get purge kubeadm

sudo apt-get purge kubectl

sudo apt-get purge kubelet

# Clean up leftover package data

dpkg -l |grep ^rc|awk '{print $2}' |sudo xargs dpkg -P

2.1.3.3 Kubernetes Cluster Installation

2.1.3.3.1 Master node deployment

[1] Download images

1. Download the required images in advance

List the images that kubernetes v1.20.5 needs:

$ kubeadm config images list --kubernetes-version=v1.20.5

k8s.gcr.io/kube-apiserver:v1.20.5

k8s.gcr.io/kube-controller-manager:v1.20.5

k8s.gcr.io/kube-scheduler:v1.20.5

k8s.gcr.io/kube-proxy:v1.20.5

k8s.gcr.io/pause:3.2

k8s.gcr.io/etcd:3.4.13-0

k8s.gcr.io/coredns:1.7.0

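Because gcr.io is blocked, the images are pulled from the v5cn repositories instead, which turned out to carry a fairly complete set.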

docker pull v5cn/kube-apiserver:v1.20.5

docker pull v5cn/kube-controller-manager:v1.20.5

docker pull v5cn/kube-scheduler:v1.20.5

docker pull v5cn/kube-proxy:v1.20.5

docker pull v5cn/pause:3.2

docker pull v5cn/etcd:3.4.13-0

docker pull v5cn/coredns:1.7.0

2. Retag the images back to k8s.gcr.io:

docker tag v5cn/kube-apiserver:v1.20.5 k8s.gcr.io/kube-apiserver:v1.20.5

docker tag v5cn/kube-controller-manager:v1.20.5 k8s.gcr.io/kube-controller-manager:v1.20.5

docker tag v5cn/kube-scheduler:v1.20.5 k8s.gcr.io/kube-scheduler:v1.20.5

docker tag v5cn/kube-proxy:v1.20.5 k8s.gcr.io/kube-proxy:v1.20.5

docker tag v5cn/pause:3.2 k8s.gcr.io/pause:3.2

docker tag v5cn/etcd:3.4.13-0 k8s.gcr.io/etcd:3.4.13-0

docker tag v5cn/coredns:1.7.0 k8s.gcr.io/coredns:1.7.0
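The pull and tag commands above follow one pattern per image, so they can be generated in a loop. The sketch below only prints the docker commands (a dry run) rather than executing them; the function name mirror_commands and its argument order are illustrative assumptions.

```shell
# Print a "docker pull" and "docker tag" command for each image.
#   $1 = mirror namespace (for example v5cn), $2 = target registry (for example k8s.gcr.io)
#   remaining arguments = image names with tags
mirror_commands() {
  local mirror="$1" target="$2"
  shift 2
  local image
  for image in "$@"; do
    printf 'docker pull %s/%s\n' "$mirror" "$image"
    printf 'docker tag %s/%s %s/%s\n' "$mirror" "$image" "$target" "$image"
  done
}

# Example use: review the output, then pipe it to sh to run it.
#   mirror_commands v5cn k8s.gcr.io kube-apiserver:v1.20.5 pause:3.2 etcd:3.4.13-0 | sh
```

Printing the commands first makes it easy to verify the image list against the output of kubeadm config images list before anything is downloaded.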

[2] Initialize the cluster with kubeadm init

<1> Starting from a configuration file

Run kubeadm config print init-defaults to obtain the default initialization parameters:

kubeadm config print init-defaults > kubeadm.yml

Configuration file kubeadm.yml:

Key settings:

- advertiseAddress: 114.67.107.240, bindPort: 6443

- kubernetesVersion: v1.20.5

- serviceSubnet: 10.96.0.0/12 // does not need to match the physical network; the default is fine

- podSubnet: 10.244.0.0/16 // does not need to match the physical network; the default is fine

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  advertiseAddress: 114.67.107.240

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: k8s-master

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd

imageRepository: k8s.gcr.io

kind: ClusterConfiguration

kubernetesVersion: v1.20.5

networking:

  dnsDomain: cluster.local

  serviceSubnet: 10.96.0.0/12

  podSubnet: 10.244.0.0/16

scheduler: {}

Initialize the cluster:

kubeadm init --config=kubeadm.yml

Output of a successful run:

$ kubeadm init --config=kubeadm.yml

[init] Using Kubernetes version: v1.20.5

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.2. Latest validated version: 19.03

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

...

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 114.67.107.240:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:99ea8c7fbd529eafe466f7b5ac403b896c700746a2d16178c5748927f04680db

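Troubleshooting:

(1) If a previous cluster configuration exists and has to be reset, reset the cluster first; all images will then need to be downloaded again. See step [7].

(2) If the following warnings appear: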

[preflight] Running pre-flight checks

[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.2. Latest validated version: 19.03

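Run:

# Setup daemon.

# Note: this overwrites any existing /etc/docker/daemon.json, so merge in earlier settings that are still needed.

# The private docker registry is also added here while we are at it.

cat > /etc/docker/daemon.json <<EOF

{

"exec-opts": ["native.cgroupdriver=systemd"],

"insecure-registries":["192.168.0.3:8080"]

}

EOF

mkdir -p /etc/systemd/system/docker.service.d

# Restart docker.

systemctl daemon-reload

systemctl restart docker

Check which cgroup driver Docker uses: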

# docker info | grep -i cgroup

Cgroup Driver: systemd

WARNING: No swap limit support

Cgroup Version: 1

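Docker 20.10.2 now uses systemd as its Cgroup Driver.

(3) If the error "port 10251 and 10252 are in use" appears, run netstat -lnp | grep 1025 and kill the listed process IDs.

(4) On cloud hosts/ECS, kubeadm cannot bind to the public IP, so cluster initialization fails.

On a JD Cloud or Alibaba Cloud host, running kubeadm init on the master with the public IP 114.67.107.240 as advertiseAddress fails to start the cluster (on such hosts the public IP is typically not bound to a local network interface).

Failure output: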

...

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[kubelet-check] Initial timeout of 40s passed.

Unfortunately, an error has occurred:

timed out waiting for the condition

This error is likely caused by:

- The kubelet is not running

- The kubelet is unhealthy due to a misconfiguration of the node in some way (required cgroups disabled)

If you are on a systemd-powered system, you can try to troubleshoot the error with the following commands:

- 'systemctl status kubelet'

- 'journalctl -xeu kubelet'

Additionally, a control plane component may have crashed or exited when started by the container runtime.

To troubleshoot, list all containers using your preferred container runtimes CLI.

Here is one example how you may list all Kubernetes containers running in docker:

- 'docker ps -a | grep kube | grep -v pause'

Once you have found the failing container, you can inspect its logs with:

- 'docker logs CONTAINERID'

error execution phase wait-control-plane: couldn't initialize a Kubernetes cluster

To see the stack trace of this error execute with --v=5 or higher

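Solution: edit etcd.yaml

After the command above is entered, kubeadm starts initializing the master node, but etcd cannot start because its manifest is wrong, so the file has to be edited.

$ vim /etc/kubernetes/manifests/etcd.yaml

Before:

- --listen-client-urls=

- --listen-metrics-urls=

- --listen-peer-urls=

After:

- --listen-client-urls=

- --listen-metrics-urls=

- --listen-peer-urls=

After a short wait, the master node finishes initializing.

<2> Starting from the command line (untested)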

sudo kubeadm init --apiserver-advertise-address=192.168.0.3 --pod-network-cidr=10.244.0.0/16 --service-cidr=10.96.0.0/12 --kubernetes-version=v1.20.5

[3] Check the cgroup driver used by kubelet

The cgroup driver that kubelet starts with must match the one docker uses.

1. Check which cgroup driver Docker uses:

$ docker info | grep -i cgroup

Cgroup Driver: systemd

WARNING: No swap limit support

Cgroup Version: 1

Docker 20.10.2 is already using systemd as its Cgroup Driver.

[4] Create the kubeconfig file used by kubectl

To let kubectl control the cluster, do the following.

For a non-root user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

For the root user:

export KUBECONFIG=/etc/kubernetes/admin.conf

[5] Let the master run workloads

kubeadm also installs kubelet on the Master, but by default the Master does not run workloads. For a single-machine All-In-One Kubernetes environment, run the command below (it removes the "node-role.kubernetes.io/master" taint from the Node) so that the Master also acts as a Node:

$ kubectl taint nodes --all node-role.kubernetes.io/master-

Output:

node/k8s-master untainted

[Note] Querying node information returns an error:

# kubectl get nodes

Unable to connect to the server: x509: certificate is valid for 47.117.64.1, 172.19.23.53, not 47.117.67.43

It turned out that kubeadm reset does not delete the files under the .kube directory. Delete them manually and repeat the steps above to fix the problem.

cd $HOME

ls -a

rm -rf .kube

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

# View pod and node information

root@k8s-master:~# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

default curl 0/1 Pending 0 168m

kube-system coredns-74ff55c5b-6rvx4 0/1 ContainerCreating 0 3h55m

kube-system coredns-74ff55c5b-gcfjf 0/1 ContainerCreating 0 3h55m

kube-system etcd-k8s-master 1/1 Running 0 3h55m

kube-system kube-apiserver-k8s-master 1/1 Running 0 3h55m

kube-system kube-controller-manager-k8s-master 1/1 Running 0 3h28m

kube-system kube-flannel-ds-wq8k4 0/1 CrashLoopBackOff 39 155m

kube-system kube-proxy-bf669 1/1 Running 0 3h55m

kube-system kube-scheduler-k8s-master 1/1 Running 0 170m

kube-system kuboard-74c645f5df-bgctl 0/1 ContainerCreating 0 77m

root@k8s-master:~# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 3h39m v1.20.5

If a Pod is in an error state, run kubectl --namespace=kube-system describe pod <pod_name> to find the cause. A common cause is that an image has not finished downloading.

$ kubectl describe pod kube-flannel-ds-wq8k4 --namespace=kube-system

...

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Warning BackOff 79s (x432 over 96m) kubelet Back-off restarting failed container

View the container's log:

$ docker ps -a

...

eab06e3325ec 8522d622299c "/opt/bin/flanneld -…" 2 minutes ago Exited (1) 2 minutes ago k8s_kube-flannel_kube-flannel-ds-wq8k4_kube-system_68626880-c87a-4dbd-926d-f889aa9bbfb7_41

docker logs -t -f 051e74608f16

docker logs -t -f k8s_kube-flannel_kube-flannel-ds-wq8k4_kube-system_68626880-c87a-4dbd-926d-f889aa9bbfb7_41

[6] Check the cluster status

Confirm that every component is healthy:

$ kubectl get cs

Warning: v1 ComponentStatus is deprecated in v1.19+

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-0 Healthy {"health":"true"}

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane,master 59m v1.20.5

# A ConfigMap object named kubeadm-config has been generated

$ kubectl get -n kube-system configmap

NAME DATA AGE

coredns 1 3h10m

extension-apiserver-authentication 6 3h10m

kube-flannel-cfg 2 110m

kube-proxy 2 3h10m

kube-root-ca.crt 1 3h10m

kubeadm-config 2 3h10m

kubelet-config-1.20 1 3h10m

# Check pod status

$ kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-74ff55c5b-pdrpb 0/1 ContainerCreating 0 12h

kube-system coredns-74ff55c5b-tfcbn 0/1 ContainerCreating 0 12h

kube-system etcd-k8s-master 1/1 Running 0 12h

kube-system kube-apiserver-k8s-master 1/1 Running 0 12h

kube-system kube-controller-manager-k8s-master 1/1 Running 0 12h

kube-system kube-flannel-ds-w5f88 0/1 CrashLoopBackOff 146 12h

kube-system kube-proxy-p9sxm 1/1 Running 0 12h

kube-system kube-scheduler-k8s-master 1/1 Running 0 12h

Notes:

(1) Timeout

$ kubectl get cs

Unable to connect to the server: dial tcp 47.117.67.43:6443: i/o timeout

The request goes to the public IP, and port 6443 has not been opened in the security group.

(2) Connection refused

scheduler Unhealthy Get "<URL>": dial tcp 127.0.0.1:10251: connect: connection refused

This happens because kube-controller-manager.yaml and kube-scheduler.yaml set the port to 0 by default. Comment that line out in both files (on every master node).

vim /etc/kubernetes/manifests/kube-controller-manager.yaml

vim /etc/kubernetes/manifests/kube-scheduler.yaml

# Comment out the port=0 line

# - --port=0

# Restart kubelet on all nodes

systemctl restart kubelet.service
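Instead of editing both manifests by hand, the --port=0 line can be commented out with sed. This is a sketch under the assumption that the flag appears on its own line as "- --port=0", as in the manifests kubeadm generates; the function name comment_out_port_zero is invented for this example, and it prints the result instead of changing the file.

```shell
# Print the manifest with the "- --port=0" argument commented out, keeping its indentation.
comment_out_port_zero() {
  local manifest="$1"
  sed -E 's/^([[:space:]]*)(- --port=0)[[:space:]]*$/\1# \2/' "$manifest"
}

# Example use (review the output before replacing the real manifest):
#   comment_out_port_zero /etc/kubernetes/manifests/kube-scheduler.yaml > /tmp/kube-scheduler.yaml
#   sudo cp /tmp/kube-scheduler.yaml /etc/kubernetes/manifests/kube-scheduler.yaml
```

Keep the temporary copy outside /etc/kubernetes/manifests, because kubelet treats every file in that directory as a static Pod manifest.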

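(3) The connection to the server localhost:8080 was refused

After closing XSHELL and logging in again, the command returns an error.

$ kubectl get cs

The connection to the server localhost:8080 was refused - did you specify the right host or port?

The cause is that kubectl has to run with the kubernetes-admin credentials. To fix it, copy /etc/kubernetes/admin.conf from the master node to the same directory on the worker node, then set the environment variable: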

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile

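[7] Installation failed, reinstall

If the installation fails, run kubeadm reset to restore the host to its original state, then run kubeadm init again.

$ kubeadm reset

Then delete the leftover files by hand:

$ rm -rf $HOME/.kube

Then start again from [1].

2.1.3.3.2 flannel network deployment

Deploying calico installs both the CNI plugins and the calico components. Deploying flannel only initializes some CNI configuration files and does not install the CNI executables, which have to be installed manually. So flannel deployment takes two steps:

- CNI plugin deployment

- flannel component deployment

Install the flannel network configuration on the master node first.

[1] CNI plugin deployment (all nodes)

1. Create the CNI plugin directory

sudo mkdir -p /opt/cni/bin

cd /opt/cni/bin

2. Download the binaries from the release page

$ sudo wget -c

Note:

If github.com is unreachable, download from a mirror in mainland China instead.

Two of the most commonly used mirror addresses:

3. Extract the archive in /opt/cni/bin to complete the installation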

sudo tar -zxvf cni-plugins-linux-amd64-v0.9.1.tgz

The following plugins are now in place:

$ ll /opt/cni/bin

total 111480

-rwxr-xr-x 1 root root 4151672 Feb 5 23:42 bandwidth*

-rwxr-xr-x 1 root root 4536104 Feb 5 23:42 bridge*

-rw-r--r-- 1 root root 363874 Jul 29 15:13 cni-plugins-linux-amd64-v0.9.1.tgz

-rw-r--r-- 1 root root 39771622 Feb 8 18:14 cni-plugins-linux-amd64-v0.9.1.tgz.1

-rwxr-xr-x 1 root root 10270090 Feb 5 23:42 dhcp*

-rwxr-xr-x 1 root root 4767801 Feb 5 23:42 firewall*

-rwxr-xr-x 1 root root 3357992 Feb 5 23:42 flannel*

-rwxr-xr-x 1 root root 4144106 Feb 5 23:42 host-device*

-rwxr-xr-x 1 root root 3565330 Feb 5 23:42 host-local*

-rwxr-xr-x 1 root root 4288339 Feb 5 23:42 ipvlan*

-rwxr-xr-x 1 root root 3530531 Feb 5 23:42 loopback*

-rwxr-xr-x 1 root root 4367216 Feb 5 23:42 macvlan*

-rwxr-xr-x 1 root root 3966455 Feb 5 23:42 portmap*

-rwxr-xr-x 1 root root 4467317 Feb 5 23:42 ptp*

-rwxr-xr-x 1 root root 3701138 Feb 5 23:42 sbr*

-rwxr-xr-x 1 root root 3153330 Feb 5 23:42 static*

-rwxr-xr-x 1 root root 3668289 Feb 5 23:42 tuning*

-rwxr-xr-x 1 root root 4287972 Feb 5 23:42 vlan*

-rwxr-xr-x 1 root root 3759977 Feb 5 23:42 vrf*

[2] Deploy flannel

1. Get the yaml file

curl -O

Note that the flannel image in kube-flannel.yml is v0.14.0, image: quay.io/coreos/flannel:v0.14.0

2. Edit the configuration file

<1> Set the Network parameter in net-conf.json to match the --pod-network-cidr passed to kubeadm init.

net-conf.json: |

{

"Network": "10.244.0.0/16",

"Backend": {

"Type": "vxlan"

}

}

3. Download the image

Download the image referenced in the yaml file.

docker pull quay.io/coreos/flannel:v0.14.0

4. Deploy

$ kubectl apply -f kube-flannel.yml

podsecuritypolicy.policy/psp.flannel.unprivileged created

clusterrole.rbac.authorization.k8s.io/flannel created

clusterrolebinding.rbac.authorization.k8s.io/flannel created

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

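Once deployed, the cluster can run normally.

Troubleshooting:

<1> If the network deployment fails or has to be redone, run the following to remove the generated network interfaces: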

sudo ifconfig cni0 down

sudo ip link delete cni0

sudo ifconfig flannel.1 down

sudo ip link delete flannel.1

sudo rm -rf /var/lib/cni/

<2> Problem on a node: cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Problem description:

# systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/lib/systemd/system/kubelet.service; enabled; vendor preset: enabled)

Drop-In: /etc/systemd/system/kubelet.service.d

└─10-kubeadm.conf

Active: active (running) since Mon 2021-08-16 12:46:35 CST; 1h 45min ago

Docs:

Main PID: 27092 (kubelet)

Tasks: 14

Memory: 40.6M

CPU: 1min 22.226s

CGroup: /system.slice/kubelet.service

└─27092 /usr/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf --config=/var/lib/kubelet/config.yaml --network-plugin=cni --pod-infra

Aug 16 14:31:33 k8s-node2 kubelet[27092]: E0816 14:31:33.642859 27092 kubelet.go:2188] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is no

Aug 16 14:31:36 k8s-node2 kubelet[27092]: W0816 14:31:36.584036 27092 cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Aug 16 14:31:38 k8s-node2 kubelet[27092]: E0816 14:31:38.644359 27092 kubelet.go:2188] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is no

Aug 16 14:31:41 k8s-node2 kubelet[27092]: W0816 14:31:41.584177 27092 cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Aug 16 14:31:43 k8s-node2 kubelet[27092]: E0816 14:31:43.135195 27092 remote_runtime.go:116] RunPodSandbox from runtime service failed: rpc error: code = Unknown desc = failed pulling image "k8s.gcr.io/pause:

Aug 16 14:31:43 k8s-node2 kubelet[27092]: E0816 14:31:43.135251 27092 kuberuntime_sandbox.go:70] CreatePodSandbox for pod "kube-proxy-t2d2g_kube-system(3b90986c-f095-4e8b-ada0-03fd420aea3a)" failed: rpc error

Aug 16 14:31:43 k8s-node2 kubelet[27092]: E0816 14:31:43.135267 27092 kuberuntime_manager.go:755] createPodSandbox for pod "kube-proxy-t2d2g_kube-system(3b90986c-f095-4e8b-ada0-03fd420aea3a)" failed: rpc erro

Aug 16 14:31:43 k8s-node2 kubelet[27092]: E0816 14:31:43.135321 27092 pod_workers.go:191] Error syncing pod 3b90986c-f095-4e8b-ada0-03fd420aea3a ("kube-proxy-t2d2g_kube-system(3b90986c-f095-4e8b-ada0-03fd420a

Aug 16 14:31:43 k8s-node2 kubelet[27092]: E0816 14:31:43.645665 27092 kubelet.go:2188] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is no

Aug 16 14:31:46 k8s-node2 kubelet[27092]: W0816 14:31:46.584316 27092 cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Running kubectl apply -f kube-flannel.yml then prints:

root@k8s-node2:/data/k8s# kubectl apply -f kube-flannel.yml

W0816 14:31:55.914180 4255 loader.go:223] Config not found: /etc/kubernetes/admin.conf

The connection to the server localhost:8080 was refused - did you specify the right host or port?

A further error:

RunPodSandbox from runtime service failed: rpc error: code = Unknown desc = failed pulling image "k8s.gcr.io/pause:

Solution:

- Copy the admin.conf file

The error says admin.conf cannot be found, so copy it from the master node to the worker nodes.

scp /etc/kubernetes/admin.conf root@114.67.107.226:/etc/kubernetes/admin.conf

scp /etc/kubernetes/admin.conf root@114.67.110.126:/etc/kubernetes/admin.conf

- Add the environment variable

echo "export KUBECONFIG=/etc/kubernetes/admin.conf" >> ~/.bash_profile

source ~/.bash_profile

- pulling image "k8s.gcr.io/pause means the image pull failed.

See 2.1.3.3.1 [1] Download images: pull the images again and retag them.

- Run again

kubectl apply -f kube-flannel.yml

5. Run kubectl get pod -n kube-system and make sure all Pods are in the Running state.

$ kubectl get pod -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-74ff55c5b-6rvx4 0/1 ContainerCreating 0 88m

coredns-74ff55c5b-gcfjf 0/1 ContainerCreating 0 88m

etcd-k8s-master 1/1 Running 0 88m

kube-apiserver-k8s-master 1/1 Running 0 88m

kube-controller-manager-k8s-master 1/1 Running 0 61m

kube-flannel-ds-wq8k4 0/1 CrashLoopBackOff 6 8m23s

kube-proxy-bf669 1/1 Running 0 88m

kube-scheduler-k8s-master 1/1 Running 0 23m

Problem description:

kube-flannel-ds-wq8k4 0/1 CrashLoopBackOff 6 8m23s

[1] Check the docker log. docker ps -a shows that the CONTAINER ID for kube-flannel is 88bd276d0862. Print its log as follows:

# docker logs 88bd276d0862

I0730 03:40:23.510106 1 main.go:520] Determining IP address of default interface

I0730 03:40:23.510398 1 main.go:533] Using interface with name eth0 and address 172.19.23.53

I0730 03:40:23.510412 1 main.go:550] Defaulting external address to interface address (172.19.23.53)

W0730 03:40:23.511160 1 client_config.go:608] Neither --kubeconfig nor --master was specified. Using the inClusterConfig. This might not work.

I0730 03:40:23.702527 1 kube.go:116] Waiting 10m0s for node controller to sync

I0730 03:40:23.702580 1 kube.go:299] Starting kube subnet manager

I0730 03:40:24.702664 1 kube.go:123] Node controller sync successful

I0730 03:40:24.702708 1 main.go:254] Created subnet manager: Kubernetes Subnet Manager - k8s-master

I0730 03:40:24.702713 1 main.go:257] Installing signal handlers

I0730 03:40:24.702814 1 main.go:392] Found network config - Backend type: vxlan

I0730 03:40:24.702883 1 vxlan.go:123] VXLAN config: VNI=1 Port=0 GBP=false Learning=false DirectRouting=false

E0730 03:40:24.704013 1 main.go:293] Error registering network: failed to acquire lease: node "k8s-master" pod cidr not assigned

I0730 03:40:24.704073 1 main.go:372] Stopping shutdownHandler...

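6. flannel relies on kernel modules, so make sure these modules are loaded.

Load the ipvs-related kernel modules

They have to be reloaded after every reboot (add the commands to /etc/rc.local to load them automatically at boot)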

$ modprobe ip_vs

$ modprobe ip_vs_rr

$ modprobe ip_vs_wrr

$ modprobe ip_vs_sh

$ modprobe nf_conntrack_ipv4

Check that the modules are loaded

$ lsmod | grep ip_vs

ip_vs_sh 12688 0

ip_vs_wrr 12697 0

ip_vs_rr 12600 0

ip_vs 145497 6 ip_vs_rr,ip_vs_sh,ip_vs_wrr

nf_conntrack 133095 9 ip_vs,nf_nat,nf_nat_ipv4,nf_nat_ipv6,xt_conntrack,nf_nat_masquerade_ipv4,nf_conntrack_netlink,nf_conntrack_ipv4,nf_conntrack_ipv6

libcrc32c 12644 4 xfs,ip_vs,nf_nat,nf_conntrack
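Scanning lsmod output by eye is error-prone when several modules are involved. The sketch below lists which of the requested modules are absent from a /proc/modules-style file, where the first column is the module name; the function name missing_modules is an assumption made for this example. Modules compiled directly into the kernel do not appear in /proc/modules, so a module reported as missing here is not necessarily unavailable.

```shell
# Print each requested module that is not listed in the given /proc/modules-style file.
#   $1 = file to inspect (normally /proc/modules), remaining arguments = module names
missing_modules() {
  local modules_file="$1"
  shift
  local mod
  for mod in "$@"; do
    # The first column of /proc/modules is the module name.
    if ! awk -v m="$mod" '$1 == m { found = 1 } END { exit !found }' "$modules_file"; then
      echo "$mod"
    fi
  done
}

# Example use:
#   missing_modules /proc/modules ip_vs ip_vs_rr ip_vs_wrr ip_vs_sh nf_conntrack_ipv4
```

An empty result means every listed module is currently loaded.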

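2.1.3.3.3 Slave (worker) node deployment

[1] Complete the environment configuration as described in 2.1.3.3.1

To eliminate the warning "[WARNING IsDockerSystemdCheck]: detected "cgroupfs" as the Docker cgroup driver. The recommended driver is "systemd". Please follow the guide at " shown when joining: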

# Setup daemon.

cat > /etc/docker/daemon.json <<EOF

{

"exec-opts": ["native.cgroupdriver=systemd"]

}

EOF

mkdir -p /etc/systemd/system/docker.service.d

# Restart docker.

systemctl daemon-reload

systemctl restart docker

# Check the result

$ docker info | grep -i cgroup

Cgroup Driver: systemd

Cgroup Version: 1

WARNING: No swap limit support

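[2] Mutual SSH trust between nodes

Goal: three Linux hosts whose login user can SSH to one another without a password

1. On each node, generate an RSA key pair with ssh-keygen

ssh-keygen -q -t rsa -N "" -f ~/.ssh/id_rsa

2. Collect all the public keys into a single authorized_keys file

Run the collection on 114.67.107.240: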

ssh 114.67.107.240 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

ssh 114.67.110.126 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

ssh 114.67.107.226 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

For security, set the permissions of this authorized_keys file to 600:

chmod 600 ~/.ssh/authorized_keys

3. Distribute the authorized_keys file, which now contains the keys of all trusted machines, to every machine

scp ~/.ssh/authorized_keys 114.67.110.126:~/.ssh/

scp ~/.ssh/authorized_keys 114.67.107.226:~/.ssh/

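4. Verify the trust. Run the command below on each node; if it prints the date from every host without asking for a password, the setup succeeded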

ssh 114.67.107.240 date;ssh 114.67.110.126 date;ssh 114.67.107.226 date;

[3] Hosts file resolution

Edit the hosts file on every server:

vim /etc/hosts

# Add the following entries:

114.67.107.240 k8s-master

114.67.110.126 k8s-node1

114.67.107.226 k8s-node2
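Because the same three entries have to be added on every server, a small helper that appends an entry only when the hostname is not already mapped can make the step repeatable. This is a minimal sketch; the function name add_host_entry is hypothetical, and it takes the file path as its first argument so it can be tried on a copy before touching /etc/hosts.

```shell
# Append "ip name" to the hosts file unless an uncommented line already maps that name.
add_host_entry() {
  local hosts_file="$1" ip="$2" name="$3"
  if awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 } END { exit !found }' "$hosts_file"; then
    return 0
  fi
  printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}

# Example use (run as root, or against a copy of the file first):
#   add_host_entry /etc/hosts 114.67.107.240 k8s-master
#   add_host_entry /etc/hosts 114.67.110.126 k8s-node1
#   add_host_entry /etc/hosts 114.67.107.226 k8s-node2
```

Running the helper a second time leaves the file unchanged, which matters when the preparation steps are repeated after a failed installation.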

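[4] flannel network deployment

Complete this step by following section "2.1.3.3.2 flannel network deployment".

[5] Join the node to the cluster

Run the following command on the slave node to join the cluster: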

kubeadm join 114.67.107.240:6443 --token abcdef.0123456789abcdef \

--discovery-token-ca-cert-hash sha256:99ea8c7fbd529eafe466f7b5ac403b896c700746a2d16178c5748927f04680db

Successful join:

# kubeadm join 114.67.107.240:6443 --token abcdef.0123456789abcdef \

> --discovery-token-ca-cert-hash sha256:99ea8c7fbd529eafe466f7b5ac403b896c700746a2d16178c5748927f04680db

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

Check the result on the master:

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 8h v1.20.5

k8s-node NotReady <none> 170m v1.20.5
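A freshly joined node usually stays NotReady for a while, as in the output above. The following sketch filters the output of kubectl get nodes down to the nodes that are not yet Ready. The function name not_ready_nodes is hypothetical, and it relies only on the column layout shown above (NAME first, STATUS second).

```shell
# not_ready_nodes: read "kubectl get nodes" output on stdin and print the
# names of nodes whose STATUS column is not exactly "Ready".
# Note: a cordoned node ("Ready,SchedulingDisabled") is also listed.
not_ready_nodes() {
  # NR > 1 skips the header line, NF >= 2 skips blank lines.
  awk 'NR > 1 && NF >= 2 && $2 != "Ready" { print $1 }'
}

# Usage (on the master): kubectl get nodes | not_ready_nodes
```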

<1> If the following error appears:

# kubeadm join 172.19.23.53:6443 --token abcdef.0123456789abcdef \

> --discovery-token-ca-cert-hash \

> sha256:7482c9bc27c43e0247c4428c1f6b859716ef335270d1be7ef90352a4694a340d

[preflight] Running pre-flight checks

[WARNING SystemVerification]: this Docker version is not on the list of validated versions: 20.10.2. Latest validated version: 19.03

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileAvailable--etc-kubernetes-kubelet.conf]: /etc/kubernetes/kubelet.conf already exists

[ERROR FileAvailable--etc-kubernetes-bootstrap-kubelet.conf]: /etc/kubernetes/bootstrap-kubelet.conf already exists

[ERROR Port-10250]: Port 10250 is in use

[ERROR FileAvailable--etc-kubernetes-pki-ca.crt]: /etc/kubernetes/pki/ca.crt already exists

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Cause: configuration files and a running kubelet are left over on this node from an earlier kubeadm run.

Solution:

# Reset kubeadm

kubeadm reset

<2> A token is valid for 24 hours by default and can no longer be used once it has expired. In that case, generate a new token on the master:

kubeadm token generate

kubeadm token create <generated-token> --print-join-command --ttl=0

Setting --ttl=0 means the token never expires.

<3> Problem: /proc/sys/net/bridge/bridge-nf-call-iptables does not exist

# kubeadm join 114.67.107.240:6443 --token abcdef.0123456789abcdef \

> --discovery-token-ca-cert-hash sha256:a4ba1051211c8cd99111d804fe41b1d3b564a8545b06e126145b506fc9a25b4f

[preflight] Running pre-flight checks

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR FileContent--proc-sys-net-bridge-bridge-nf-call-iptables]: /proc/sys/net/bridge/bridge-nf-call-iptables does not exist

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Fix:

modprobe br_netfilter

echo 1 > /proc/sys/net/bridge/bridge-nf-call-iptables

echo 1 > /proc/sys/net/ipv4/ip_forward
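The three commands above take effect immediately but are lost on reboot. A minimal sketch for making them persistent follows, assuming a systemd-based system such as the Ubuntu hosts used here. The function name persist_bridge_settings and the file name k8s.conf are assumptions; any file name under these two directories would do.

```shell
# persist_bridge_settings [ROOT]: write the config files that re-apply the
# settings at boot. ROOT defaults to the real filesystem; pass a scratch
# directory to inspect the result without touching the system.
persist_bridge_settings() {
  local root=${1:-}
  mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d"
  # Modules listed here are loaded at boot by systemd-modules-load.
  echo br_netfilter > "$root/etc/modules-load.d/k8s.conf"
  # Kernel parameters listed here are applied by "sysctl --system".
  cat > "$root/etc/sysctl.d/k8s.conf" <<'EOF'
net.bridge.bridge-nf-call-iptables = 1
net.ipv4.ip_forward = 1
EOF
}

# Usage (as root): persist_bridge_settings && modprobe br_netfilter && sysctl --system
```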

<4> Problem: Unable to update cni config: no networks found in /etc/cni/net.d

Checking the kubelet status on the node reveals the following problem:

root@k8s-node2:/data/k8s# systemctl status kubelet

● kubelet.service - kubelet: The Kubernetes Node Agent

Loaded: loaded (/lib/systemd/system/kubelet.service; enabled; vendor preset: enabled)

Drop-In: /etc/systemd/system/kubelet.service.d

└─10-kubeadm.conf

Active: active (running) since Mon 2021-08-16 12:46:35 CST; 1h 39min ago

Docs:

Main PID: 27092 (kubelet)

Tasks: 14

Memory: 41.5M

CPU: 1min 18.405s

CGroup: /system.slice/kubelet.service

└─27092 /usr/bin/kubelet --bootstrap-kubeconfig=/etc/kubernetes/bootstrap-kubelet.conf --kubeconfig=/etc/kubernetes/kubelet.conf --config=/var/lib/kubelet/config.yaml --network-plugin=cni --pod-infra

Aug 16 14:26:21 k8s-node2 kubelet[27092]: W0816 14:26:21.567855 27092 cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Aug 16 14:26:22 k8s-node2 kubelet[27092]: E0816 14:26:22.140015 27092 remote_runtime.go:116] RunPodSandbox from runtime service failed: rpc error: code = Unknown desc = failed pulling image "k8s.gcr.io/pause:

Aug 16 14:26:22 k8s-node2 kubelet[27092]: E0816 14:26:22.140073 27092 kuberuntime_sandbox.go:70] CreatePodSandbox for pod "kube-proxy-t2d2g_kube-system(3b90986c-f095-4e8b-ada0-03fd420aea3a)" failed: rpc error

Aug 16 14:26:22 k8s-node2 kubelet[27092]: E0816 14:26:22.140089 27092 kuberuntime_manager.go:755] createPodSandbox for pod "kube-proxy-t2d2g_kube-system(3b90986c-f095-4e8b-ada0-03fd420aea3a)" failed: rpc erro

Aug 16 14:26:22 k8s-node2 kubelet[27092]: E0816 14:26:22.140141 27092 pod_workers.go:191] Error syncing pod 3b90986c-f095-4e8b-ada0-03fd420aea3a ("kube-proxy-t2d2g_kube-system(3b90986c-f095-4e8b-ada0-03fd420a

Aug 16 14:26:23 k8s-node2 kubelet[27092]: E0816 14:26:23.548016 27092 kubelet.go:2188] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is no

Aug 16 14:26:26 k8s-node2 kubelet[27092]: W0816 14:26:26.568025 27092 cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Aug 16 14:26:28 k8s-node2 kubelet[27092]: E0816 14:26:28.548935 27092 kubelet.go:2188] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is no

Aug 16 14:26:31 k8s-node2 kubelet[27092]: W0816 14:26:31.568274 27092 cni.go:239] Unable to update cni config: no networks found in /etc/cni/net.d

Aug 16 14:26:33 k8s-node2 kubelet[27092]: E0816 14:26:33.550208 27092 kubelet.go:2188] Container runtime network not ready: NetworkReady=false reason:NetworkPluginNotReady message:docker: network plugin is no

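docker ps shows that flannel is not running on the node. Redeploy the flannel network as described in section 2.1.3.3.2. The log above also shows the k8s.gcr.io/pause image failing to pull, so check that the node can obtain that image as well.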
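The kubelet warning means that it found no network configuration in /etc/cni/net.d. A small sketch for checking this directly on a node is shown below. The function name has_cni_config is hypothetical, and the three file extensions are the ones the CNI loader is assumed to read.

```shell
# has_cni_config [DIR]: succeed if DIR holds at least one CNI config file.
has_cni_config() {
  local dir=${1:-/etc/cni/net.d} f
  for f in "$dir"/*.conf "$dir"/*.conflist "$dir"/*.json; do
    # An unmatched glob stays literal, so test that the file really exists.
    [ -f "$f" ] && return 0
  done
  return 1
}

# Usage (on the node):
#   has_cni_config || echo "no CNI config yet: deploy the network plugin first"
```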

2.1.3.3.4 Configuring labels

All three nodes are now in the Ready state, but the ROLES column of the newly joined nodes shows <none>.

# kubectl get nodes -o wide

NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME

k8s-master Ready control-plane,master 8h v1.20.5 192.168.0.3 <none> Ubuntu 16.04.5 LTS 4.4.0-62-generic docker://18.9.7

k8s-node1 Ready <none> 10m v1.20.5 192.168.0.3 <none> Ubuntu 16.04.5 LTS 4.4.0-62-generic docker://18.9.7

k8s-node2 Ready <none> 8h v1.20.5 192.168.0.5 <none> Ubuntu 16.04.5 LTS 4.4.0-62-generic docker://18.9.7

Assign the worker role to node1 and node2.

# kubectl label nodes k8s-node1 node-role.kubernetes.io/worker=

# kubectl label nodes k8s-node2 node-role.kubernetes.io/worker=

Check again: the roles are now set.

# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 9h v1.20.5

k8s-node1 Ready worker 45m v1.20.5

k8s-node2 Ready worker 9h v1.20.5

2.1.3.3.5 Installing Kuboard [unsuccessful]

If you already have a Kubernetes cluster, a single command installs Kuboard: kubectl apply -f. Then open port 32567 on any node of the cluster to reach the Kuboard UI.

$ kubectl apply -f

deployment.apps/kuboard created

service/kuboard created

serviceaccount/kuboard-user created

clusterrolebinding.rbac.authorization.k8s.io/kuboard-user created

serviceaccount/kuboard-viewer created

clusterrolebinding.rbac.authorization.k8s.io/kuboard-viewer created

Obtain the token with the following commands:

kubectl create clusterrolebinding serviceaccounts-cluster-admin --clusterrole=cluster-admin --group=system:serviceaccounts

kubectl create serviceaccount dashboard -n default

kubectl create clusterrolebinding dashboard-admin -n default --clusterrole=cluster-admin --serviceaccount=default:dashboard

kubectl get secret $(kubectl get serviceaccount dashboard -o jsonpath="{.secrets[0].name}") -o jsonpath="{.data.token}" | base64 --decode

2.1.3.3.6 Tearing down the cluster

To undo what kubeadm has done, first drain the node and make sure it is empty, then shut it down.

Run on the master node:

kubectl drain <node name> --delete-local-data --force --ignore-daemonsets

kubectl delete node <node name>

Then, on the node being removed, reset the kubeadm installation state:

sudo kubeadm reset

To reconfigure the cluster, simply run kubeadm init or kubeadm join again with the new parameters.
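The two master-side steps of this procedure can be wrapped in one helper so that the node is only deleted after a successful drain. This is a sketch; the function name remove_node is hypothetical, and the kubectl flags are exactly the ones used in this section.

```shell
# remove_node NODE: drain NODE, then delete it from the cluster.
# Run on the master. Stops if the drain step fails.
remove_node() {
  local node=${1:-}
  [ -n "$node" ] || { echo "usage: remove_node <node name>" >&2; return 2; }
  kubectl drain "$node" --delete-local-data --force --ignore-daemonsets || return 1
  kubectl delete node "$node"
}

# Usage: remove_node k8s-node2
# Afterwards run "sudo kubeadm reset" on the removed node itself.
```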

2.1.4 References

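(1) Deploying a Kubernetes v1.16.3 cluster with kubeadm

(2) Installing kubernetes-1.12.0 with kubeadm on Ubuntu-18.04

(3) Installing Kubernetes 1.20.5 on Ubuntu 18

(4) Building a Kubernetes cluster with kubeadm

(5) 《Kubernetes权威指南:从Docker到Kubernetes实践全接触(第4版)》 (Kubernetes: The Definitive Guide, 4th edition), Chapter 2, "Kubernetes Installation and Configuration Guide"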